AI detection is dead. Long live authentication.

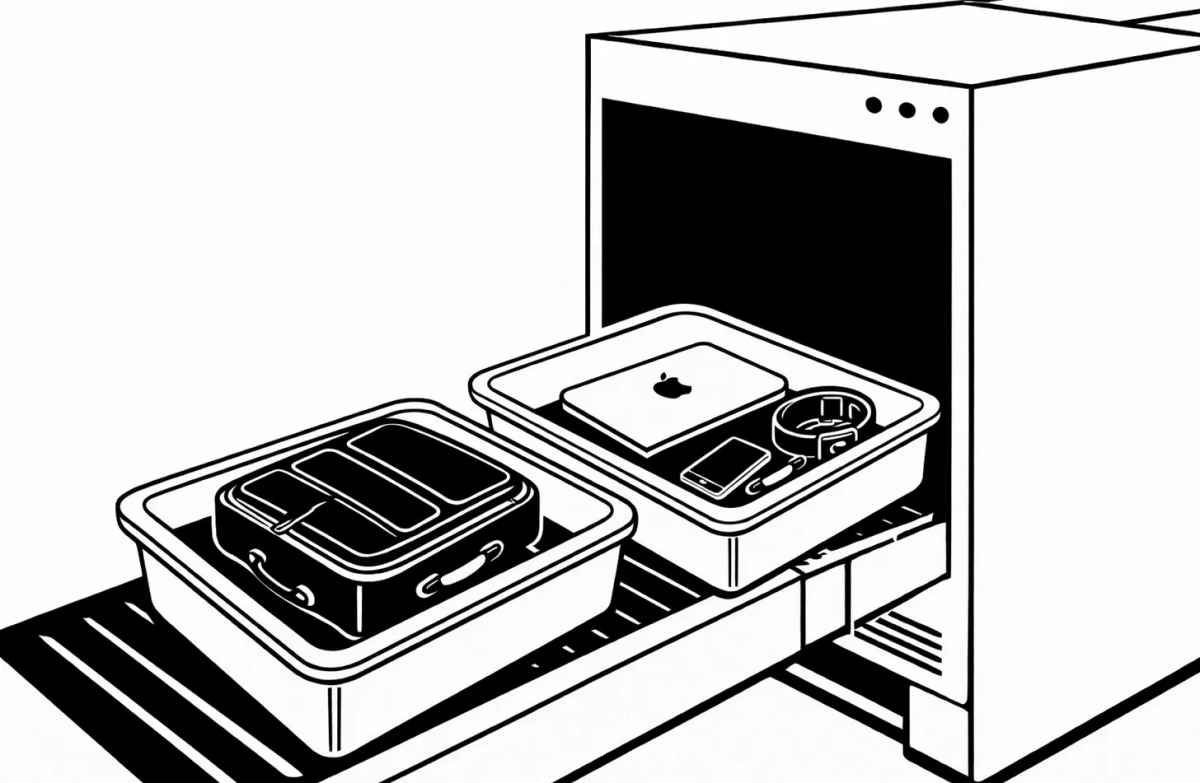

I came through airport security yesterday. The officer let me keep my flip-flops on, but he was determined to have my fabric belt and watch off before I dutifully laid out my laptop, chargers, liquids, glasses, coins, and mints in the tray, raised my arms to the correct angles in the body scanner, got swiped and was then given the nod to confirm I was not a threat today.

I found a good-enough coffee in the departure lounge and sat down to doom-scroll the clock down. Somewhere around the second sip, I realised I had forgotten to move several items into my hold luggage. Things that should not, by the logic of the system, and the many machines and people I had just cleared, have made it through the gate. I had my usual moment of panic at the thought of being caught doing the wrong thing, and then quickly got over myself. I wasn’t going to turn myself in.


The system had been satisfied. I hadn’t been caught, not because I posed no threat, but because the system was not designed to catch everything. It was designed to look like it would catch everything. Bruce Schneier, a security technologist, called this security theatre: measures that make people feel safer without making them safer, visible rituals that reassure the public, satisfy the risk assessors, and produce a veneer of safety, whilst determined adversaries or absent-minded travellers (like me) find their way around them without particular difficulty.

I thought about detection systems all the way down to the gate.

And later, when I was finally settled on the flight, I started thinking about the detection systems we use to protect against plagiarism.

One day, everyone will say that we always knew about the fallacy of trying to detect plagiarism in the age of generative AI, that the tools we bought were theatre, that the students were always a step ahead, and that we kept the software running anyway because the appearance of accountability was easier to defend than the absence of it.

I think that day is now.

I think schools should stop using software tools to detect plagiarism.

I don’t say this lightly, as I know that there are polarising and shifting views on this. Many schools are wedded to detection, and many others have already moved on. For a long time, I have defended plagiarism detection tools because I thought they were the fairest way to treat all students who did not plagiarise.

However, I no longer think they protect fairness. I think they actually do the opposite. I think we need to turn them off.

Here are five reasons why:

#1

It doesn’t work.

Newton said that for every action there is an equal and opposite reaction. So, whether you want to hear it or not, for every tool to detect plagiarism or generative AI, there is another designed to defeat it. This might not have been the case a couple of years ago, but here we are.

Tools that defeat detection can be free, require no login, and take thirty seconds. Your students are using them. Some well-known ones include BypassGPT, Phrasly, Rewritify, and TextGuard, all openly marketed and extensively reviewed. TextGuard has over 1,300 reviews on Trustpilot and a 4.5-star rating. One reviewer shares that it is “an invaluable tool that no student should go without.” Read the reviews yourself. Your students already have.

One of mine mentioned this last week as a point of mild general interest. There was no embarrassment or lowering of the voice. The system is being openly gamed, and the people running it are the last ones left in the building who seem to know about it.

Independent studies have found detection accuracy collapsing below 30%, and under 5% with simple paraphrasing. Turnitin itself acknowledges a variance of plus or minus fifteen percentage points, which means a 50% AI score could legitimately fall anywhere between 35% and 65%. I don’t think a confident conclusion about any individual student’s work can really be justified on that basis.
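To make that arithmetic concrete, here is a minimal sketch of what a plus-or-minus fifteen point margin does to a single reported score. The function names and the 50% cut-off are hypothetical, chosen only for illustration. They are not taken from Turnitin or any other tool, and the margin is simply the figure quoted above.

```python
def score_range(reported: float, margin: float = 15.0) -> tuple[float, float]:
    """Return the (low, high) band around a reported AI score, clamped to 0-100.

    `margin` is the plus-or-minus figure quoted in this post; it is an
    assumption of this sketch, not a value read from any detection tool.
    """
    low = max(0.0, reported - margin)
    high = min(100.0, reported + margin)
    return low, high


def verdict(reported: float, threshold: float, margin: float = 15.0) -> str:
    """Classify a score against a hypothetical school cut-off.

    The result is only conclusive when the whole band sits on one side
    of the threshold.
    """
    low, high = score_range(reported, margin)
    if low >= threshold:
        return "above threshold"
    if high < threshold:
        return "below threshold"
    return "inconclusive"


print(score_range(50))   # (35.0, 65.0)
print(verdict(50, 50))   # inconclusive
print(verdict(60, 50))   # inconclusive: the band runs from 45 to 75
```

Under these assumptions, any reported score within fifteen points of the cut-off cannot be placed on either side of it, and that is exactly the range where most contested cases are likely to sit.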

Yale, Northwestern and UCL (and many, many others) have already disabled or banned AI detection tools. These are not peripheral institutions hedging their bets. They have looked at the evidence and reached a verdict: turn it off.

#2

It is protecting the wrong thing.

For me, the essay was never really about the essay. These tasks were just proxies for productive struggle, a way of forcing students to organise their incomplete thinking, to close the gap between what they understood and what they needed to say, to revise, reconsider, and arrive somewhere they hadn’t been when they started. The evidence was the product of this struggle, and the process was the education. Using AI steals the struggle, and with it, the learning.

A student told me recently (with a smile) that her friend hadn’t really written her Extended Essay. She’d used Claude, then run it through a humaniser tool, and then submitted something she described as “basically fine.” The detection system was satisfied, and her friend ended up getting a good mark even though she knew she’d learned almost nothing, and was, sadly, happy to tell others about it.

Detection cannot see any of that. It looks at the final product and tries to determine whether a human wrote it. It has no way to measure the struggle that is absent, the understanding that did not develop, the capability that was not built. A fabricated piece of work can pass every detector on the market and still make it onto the plane.

Personally, I don’t think the fraud is the AI-generated text. The fraud is the absence of any productive struggle. And our detection is currently pointing in the wrong direction.

#3

It is systemically unfair.

Consider who ends up getting caught. It’s rarely the student whose friends or parents know about the anti-detection tools, or whose older sibling might have passed the knowledge down. The student who gets caught is the one who didn’t know and submitted it unprocessed because no one told them how to doctor it.1

So this is not just about cheating. It is about information asymmetry. Good students with no prior concerns are vulnerable to being flagged because they don’t know to run it through a humaniser tool first. Whilst down the corridor, savvy students who do know can collect their clean results and move on.

This knowledge flows through networks of advantage, through siblings, friends, and Reddit groups who have been through the system and who just want to launder the love.

Detection doesn’t solve the problem. Rather, it helps to perpetuate it. We need to protect productive struggle, not detection.

#4

It is structurally biased.

The systemic problem I shared above is about who knows. But the structural problem is different and, in fact, more disturbing for me because it has nothing to do with what a student knows or does not know. It is built into the algorithm itself.

A Stanford study found that AI detectors misclassified an average of 61% of TOEFL essays written by non-native English speakers as AI-generated, and 89 of the 91 essays were flagged by at least one detector.2

Ouch. That’s probably not what the detection tools were designed to catch.

When a student writes in their second or third language, they have to work harder than the student beside them to produce work that is coherent, cogent, and clear. You can see the effort in the work. And the detector flags it because polished non-native prose looks a lot like AI to an algorithm trained predominantly on native English writing. Researchers have unearthed an irony whereby improving linguistic quality reduces the false positive rate by nearly 50 percentage points, which means the system invariably ends up being most suspicious of the students who are trying hardest.

In a school with a genuinely international student body, this is not a distant statistical concern. It describes students sitting in classrooms right now, submitting work in good faith, only to be met by an algorithm that treats their authentic human effort as evidence of fraud. It turns up as a structural bias embedded in the detection algorithm. We cannot accept that.

#5

It is slowing us down.

This may be the most important reason of all. Detection isn’t just failing; it is delaying the shift that we urgently need to make towards the authentication of student work.

While it exists, schools running on the theatre of detection are delaying the harder work. If we turn the detectors off, the absence of an alternative will mean we have to look at things differently.

We need to shift towards authentication. The viva voce, the oral defence, the ten-minute conversation at the end of a submission window that tells you more than any rubric ever can. Process trails that make thinking visible over time (such as notes, questions, and dialogue) are all evidence of productive struggle.

Detection looks for clues and signals in the text, whilst authentication looks for clues in the learner.

Authentication also addresses both equity problems in ways that detection cannot. It doesn’t rely on catching something in the text, and it doesn’t run an algorithm over prose. Instead, it relies on knowing the student well enough to ask the right questions. The shift from detection to authentication also reflects a change in what we are saying to students. Detection signals that we do not trust our students, which is exactly the opposite of what we want our relationship with them to be. Authentication, on the other hand, signals that we believe learning has occurred here and that we want to see it.

Detection is now about as useful as asking me to remove my fabric belt to go through the airport scanners. I am not suggesting that authentication is easy work, or that it will not raise questions of teacher workload. It will, because authentication takes time, requires changes to the way we do things, and asks different questions. And change is always painful in schools.

But the alternative to this choice to shift (maintaining the theatre while the students work around us) is not a neutral position. It is also a choice.

Final Word Count

If I have not said it plainly enough, academic integrity matters.

But I don’t think detection can protect it. We need to move on to authentication. It’s different work, with a different purpose, set of outcomes, and set of challenges. But we can’t get to that work whilst we hold on to the belief that detection is the way to safeguard integrity.

Is it time to turn it in?

I think it is.

1. OK, it’s also true that some of those who get caught are either naive or deluded, too.

2. Liang et al. (2023), “GPT detectors are biased against non-native English writers,” Patterns, Cell Press. Available at: https://doi.org/10.1016/j.patter.2023.100779
